You don’t need more data. You need perspective.

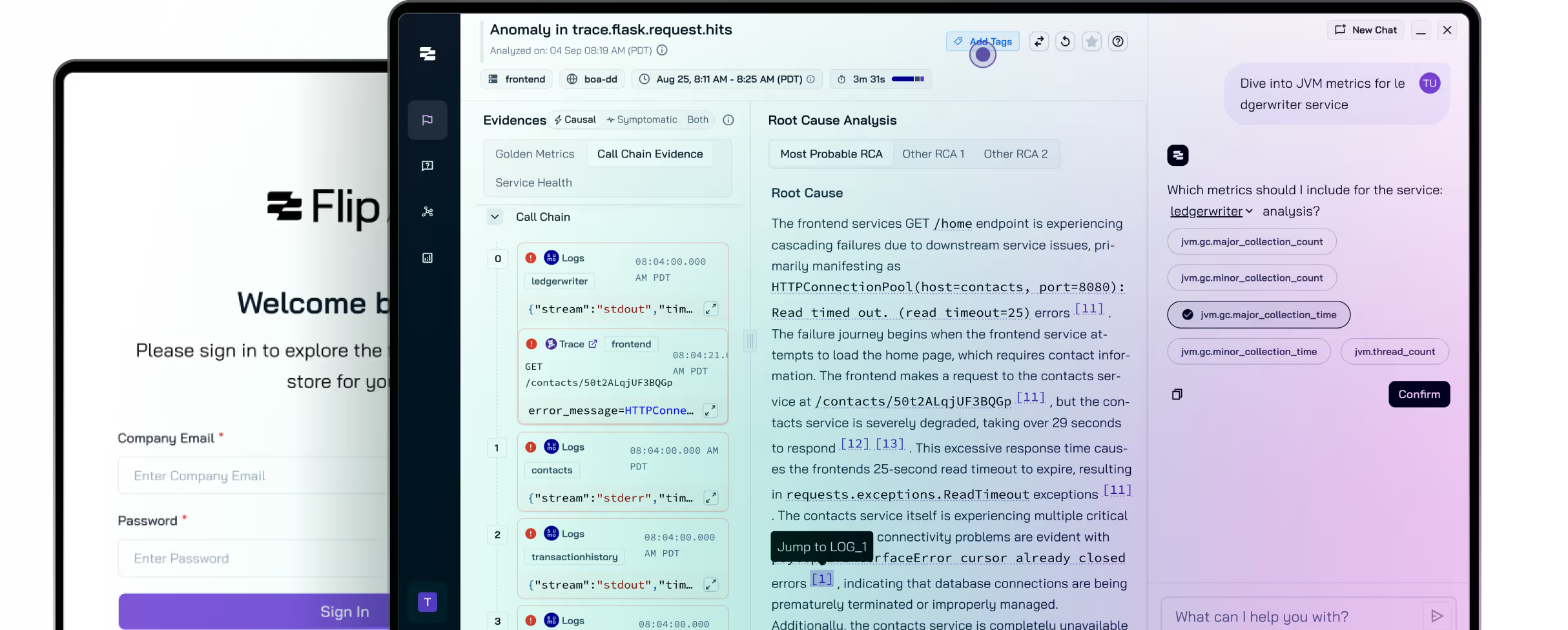

Flip AI is the first AI copilot for SREs, cutting through observability noise and giving teams perspective on RCAs in seconds.

What Flip Solves

Your team is flooded with telemetry.

But when things break, answers are still hard to find.

Observability tools have multiplied. With each new dashboard, alert, or log, clarity has become harder to achieve. Flip bridges the gap between data and understanding by delivering contextual, explainable insights for root cause analysis right when you need them. It’s not about seeing more. It’s about gaining a better perspective.

From Signal to Story, in Seconds

Flip transforms telemetry data into clear and credible narratives that align teams, build trust, and move work forward, quickly.

Plug into Your Stack

Flip integrates with the tools you already use: Datadog, Splunk, AppDynamics, and more.

Connect the Dots

Flip analyzes telemetry, architecture, and behavioral patterns to surface connections that matter.

Learn from the Past

Historical incidents and tribal knowledge train Flip’s models in your real-world context.

Deliver Real Answers

Natural language root cause analysis makes every incident faster to triage and easier to explain.

Signal-Based Root Cause Analysis. Cuts through the noise and highlights only what matters.

Timeline-Based Investigation. See how incidents unfold across your systems in real time.

Context-First Alerting. Alerts that tell you what broke, why, and what to look at next.

Adaptive Analytics. Learns your architecture, your tools, and your norms to improve over time.

Works With What You Already Use

Flip is plug-in ready for Datadog, Splunk, AppDynamics, and more.

Flip acts as a contextual intelligence application that learns from your environment without demanding changes to your existing observability stack. Rather than replacing your tools, it connects them, ingesting data from platforms like Datadog and Splunk to offer deep context and surface meaningful insights when and where they’re needed. Whether you use open-source telemetry or enterprise-grade monitoring suites, Flip adapts to your setup, learns how your systems behave, and continuously refines its output to reflect the realities of your architecture. It brings perspective to observability, right out of the box.

Enterprise-Grade Security from Day One

SOC 2-compliant and designed for privacy-first deployments

Flip is engineered for high-trust environments where privacy and compliance aren’t negotiable. Its read-only data access, minimal footprint, and flexible deployment options reflect a deep understanding of what enterprise teams in finance, B2B SaaS, and critical infrastructure demand. For Flip, security and compliance are foundational.

Created by SREs Who’ve Been On-Call at 2 AM

Flip is the result of late-night pages, protracted downtime, and years spent battling complexity at scale. The engineers behind Flip know what’s broken in observability because they lived it. That’s why every feature is built to earn trust from skeptical, overloaded teams who need answers that work in the moment.

Questions you may have

How long does setup take? Most teams are up and running in under a day.

What tools does Flip integrate with? Flip supports Datadog, Splunk, Prometheus, New Relic, OpenTelemetry, and more.

Is there a free trial? Yes. You can start a 14-day trial with full product access.

What about data privacy? Flip is read-only by default and never stores sensitive data unless explicitly configured to do so.

How does pricing work? We offer self-serve and enterprise plans. See the pricing page for details.